Assuming the Risk

When I worked in product management, we made a continuous effort to simplify applications. The more that can be done with less, the easier a system is to maintain. Newspapers employ editors for similar reasons, and authors work with publishers.

Simplifying things is hard. My old politics tutor said the only apology he would accept was for not writing a shorter essay.

This is why I raise a wry smile when someone tells me how many lines of code they generated. People boast about using AI to produce more than any human ever could. Okay, but what is the outcome of that code? How is your life better because of it and, if you are in business, how are other people’s lives better?

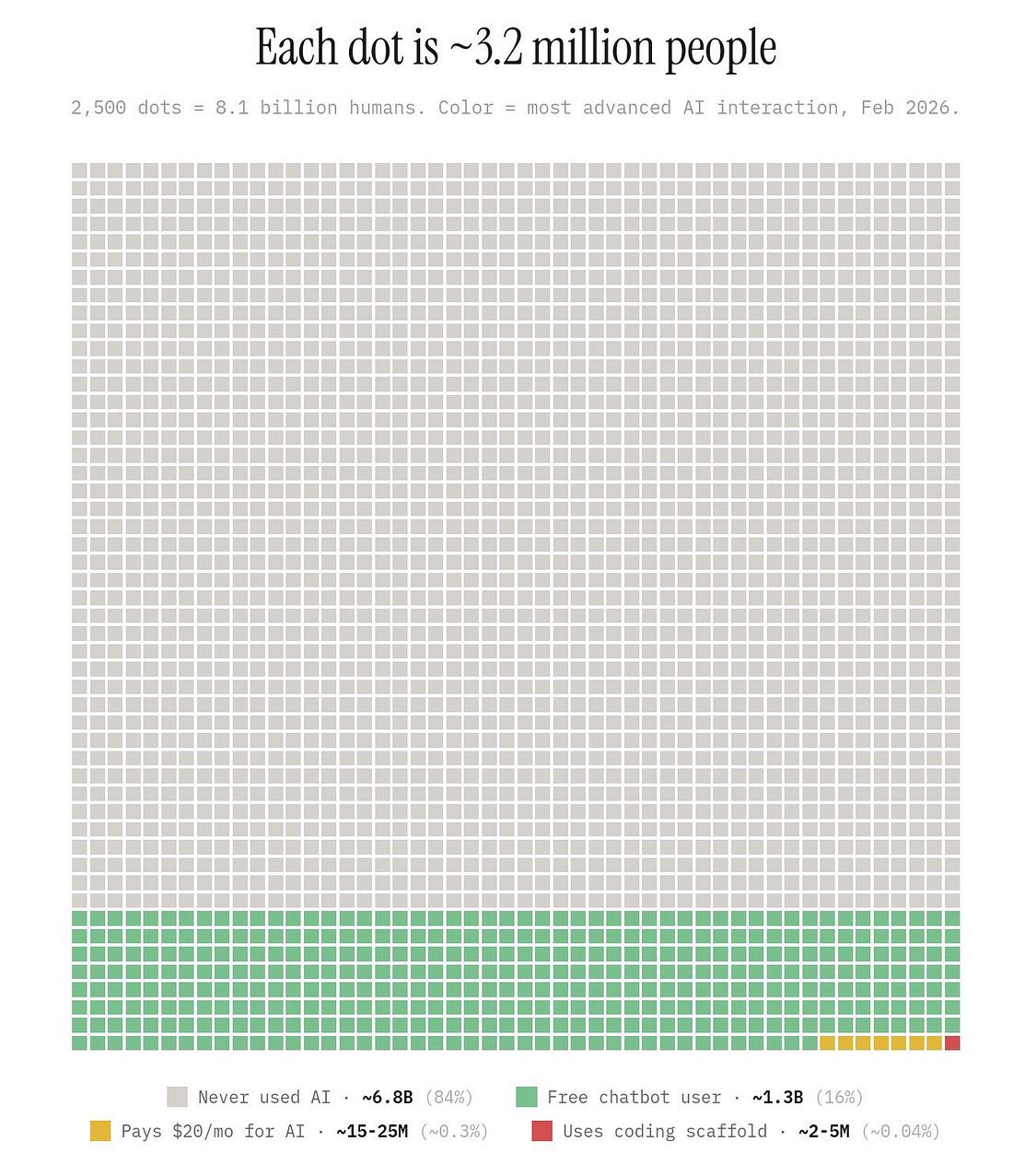

By now you have probably seen the graph showing AI adoption against all the people who have never used it. There is one square in the bottom right corner representing those who use AI for coding. The world is not being overrun by vibe-coding entrepreneurs.

Source: Noah Epstein on X

At the same time, coding is the most common use of Claude. Analysis of API calls shows that up to half are used to automate coding tasks. The AI optimist’s view is that there are billion-dollar companies waiting to be built in areas such as finance and education, where there are far fewer calls. Low adoption is the opportunity. Meanwhile, AI realists say this is normal technology that takes time to diffuse through the economy. Both may be right, but who will do the building?

One of the companies we partner with at MSBC has built its own CRM. It was using HubSpot but found that it did not deliver the precise visualisation the business needed. Being a technology company, it made sense to create its own CRM.

Most companies are not going to do this. Building software is complex. Any program that involves customer data or performs automated functions runs into legal and financial complications. That is why, when you sign up for software, there are extensive terms and conditions that you scroll past before ticking the box. The distribution of liability is hidden in the small print. When you build your own software, the risk is all yours.

Legal and compliance issues are expensive to address. Companies gain economies of scale from solving problems and selling solutions to many others. Software companies are no different. If you code your own applications, then you may lack the scale to recoup your costs.

Managing the Risk

There are examples of successful in-house applications. An ETF investment company in the western US reduced its need to subscribe to Bloomberg and FactSet. The benefit arose because these suppliers are expensive middlemen who provide convenience but do not remove compliance risk. The company built a cheaper tool, not a better one.

When you ask AI to design a software application, where does it get the template from? It is not designing from first principles, taking into consideration your company’s intricate needs. It is copying a template from an open-source library. You are getting a standard version of software that still needs fitting to your business.

If you have an engineering team, they can access open-source software and adapt it to your business. If you do not, then we have a thriving business at MSBC that does exactly this. We take care of compliance and guardrails and have over 20 years of experience doing this in your industry. This is less about scale, because each of our clients is different, and more about experience and know-how.

If you vibe-code an application, then you still need engineers to update and maintain it. You change their job from building to instructing AI and then fitting the output to your business. This is what we are seeing in surveys and on social media.

A number of technology companies have said that humans no longer write code. At first it was assumed this would mean fewer engineers, but that is not how it is panning out.

There are two long-term trends that have been almost uninterrupted by the launch of LLMs. The first is the gradual reduction in hardware engineers. Hardware is capital-intensive and increasingly a thin layer on which software runs. For example, NVIDIA has a suite of frameworks and models that allow users to optimise its GPUs.

The second trend is the rising demand for software engineers that this shift creates. The reduction in numbers after ChatGPT’s release looks like a blip. Software is applied logic and has no boundaries. Whether engineers are coding or operating, demand for them grows as there are few limits on what they can do.

Capitalism Unchanged

When you pay a software company for its application, you are transferring your risk to them. Software is about solving problems, assuming risk, and spreading the cost across many customers.

When you code your own software, you are copying a commercial version and assuming some of the risks associated with it. You still require a team to manage and maintain the deployment.

Being able to generate vast quantities of code places a premium on planning and maintenance. It is little wonder those working with AI claim to be working harder than ever. Just because you can do more does not mean it is better to do so. Specialisation is a cornerstone of capitalism that remains unchanged.

Questions to Ask and Answer

What workflows would you most like to automate?

Are these standard workflows or custom outcomes?

What are the associated legal and financial risks?